Platform Tour

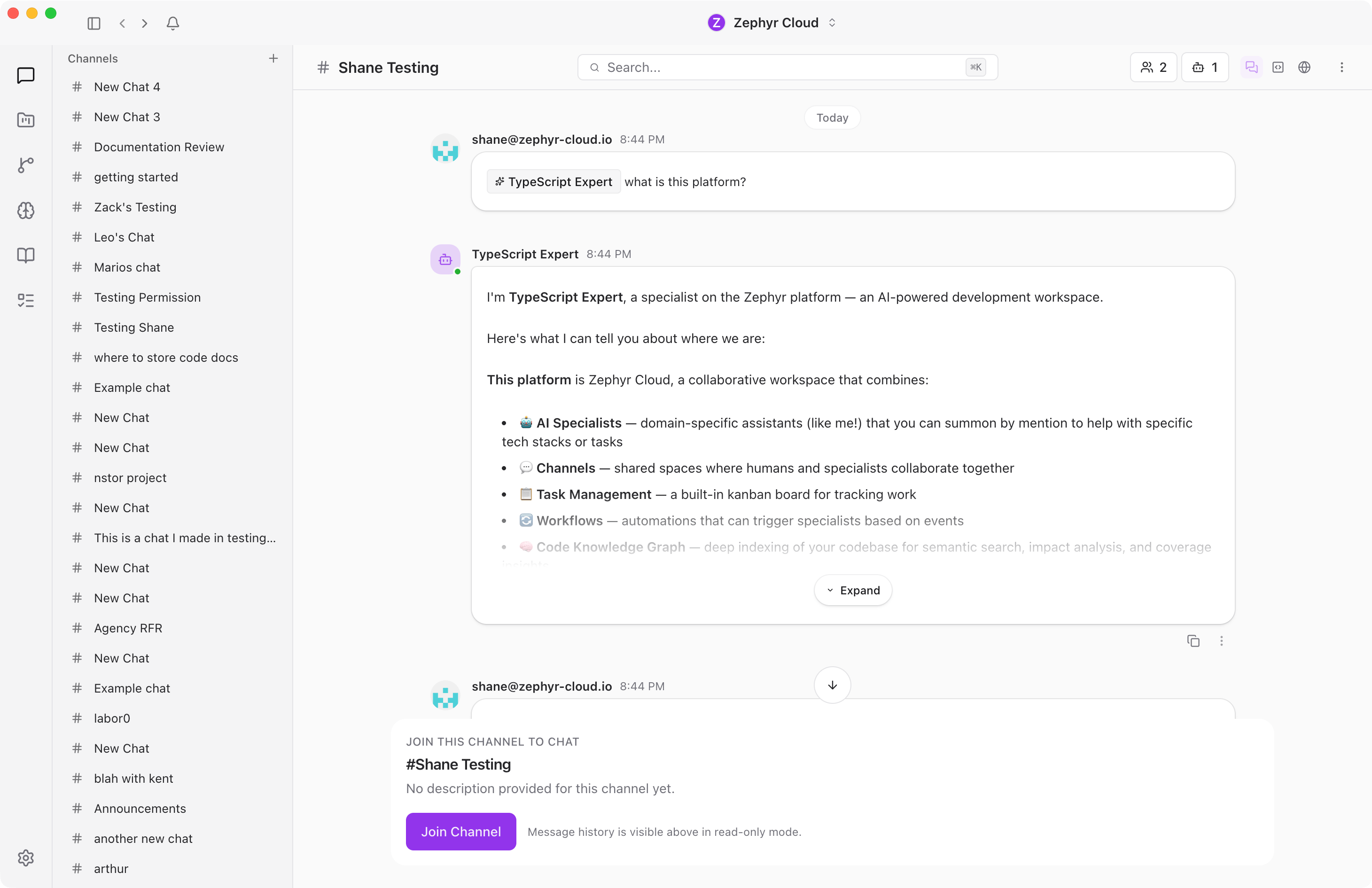

The AI Platform is a multi-agent workspace where your team collaborates with AI specialists in channels. Specialists carry defined roles, knowledge, tools, and benchmark history. You chat with them the same way you chat with teammates.

This page is a tour of every major surface in the app. If you want to start using it right away, head to Installation and come back here once you are signed in.

The Sidebar

The left side of the app has two areas: the icon rail and the channel list.

The icon rail is the narrow strip of icons on the far left. Each icon opens a different section of the platform: Chat, Projects, Workflows, Specialists, Knowledge Garden, and Tasks, with Settings at the bottom.

The channel list sits next to the icon rail. It shows your channels, which are group conversations where you chat with your team and mention specialists with @ to get AI-powered responses. Create as many channels as you need for different topics. See Your First Channel for a walkthrough.

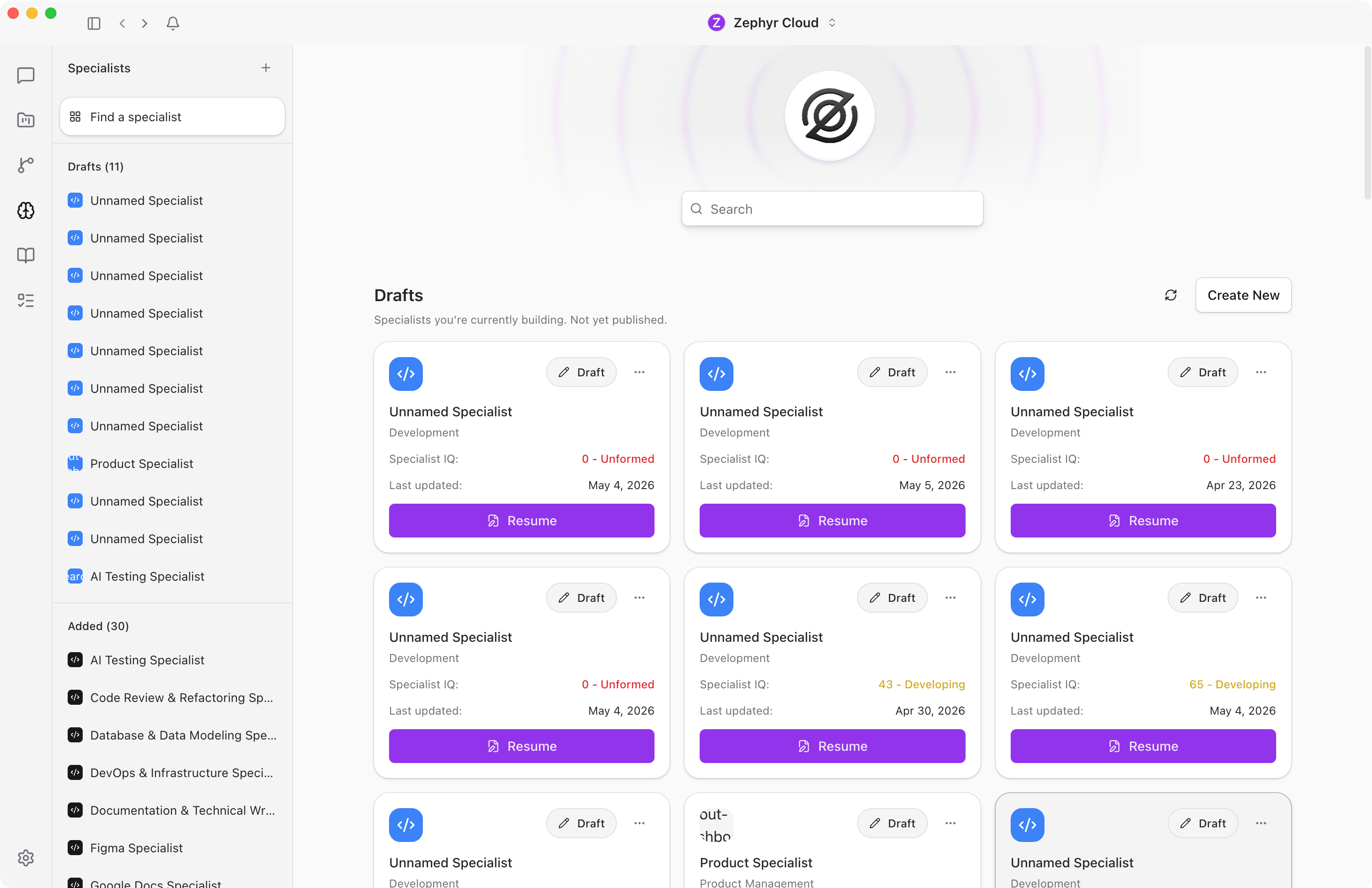

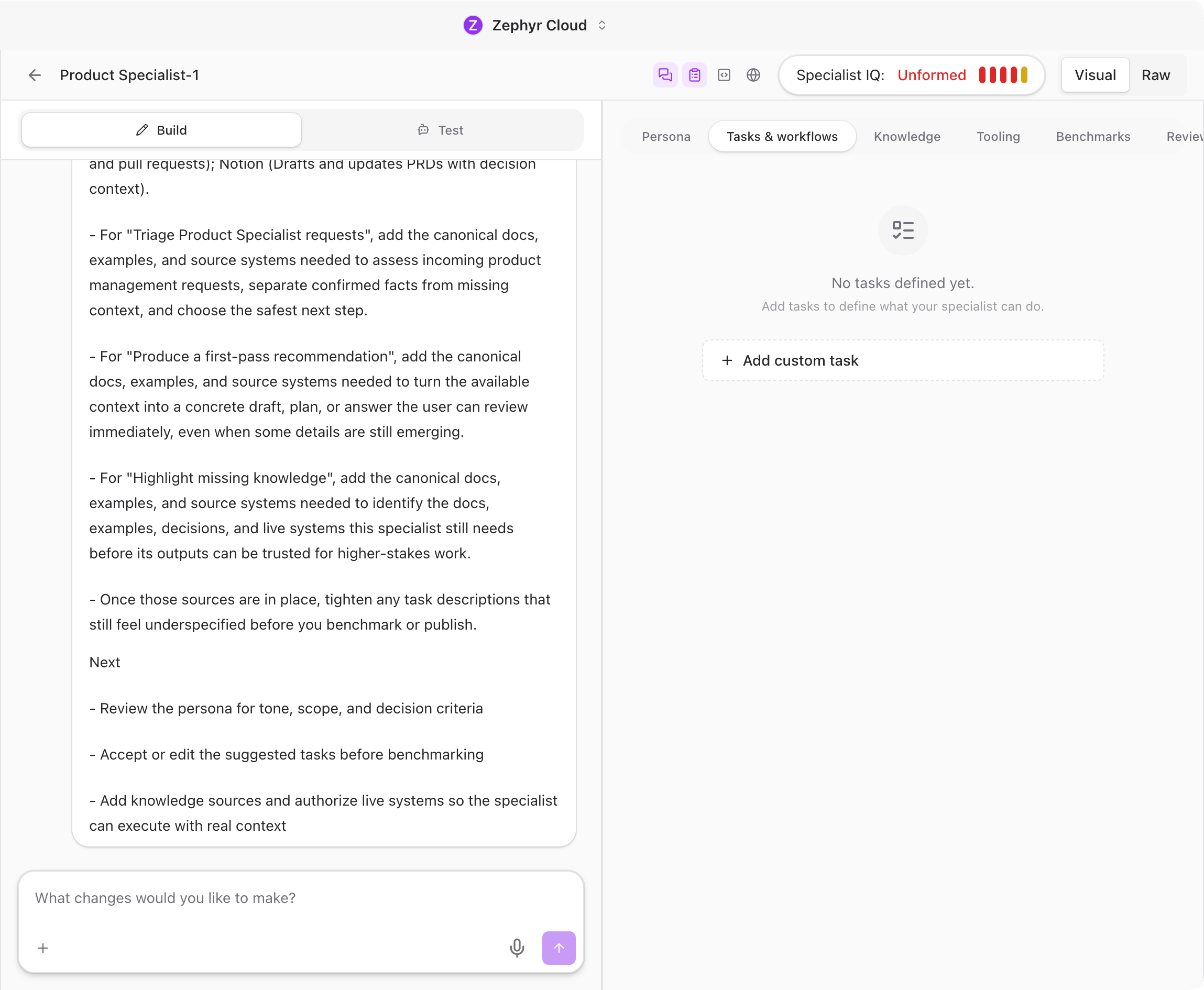

Specialists

Specialists are AI agents that carry a defined role, preferred models, a knowledge base, tool permissions, and benchmark history. When you ask a specialist to review code, it already has the context, tools, and instructions to do that job well.

Browse the marketplace to find pre-built specialists and add them to your workspace, or build your own using the specialist builder.

The builder lets you define a specialist's persona, tasks, knowledge sources, available tools, and benchmarks for evaluating its performance.

Mention a specialist with @ in a channel and it responds. Send a message without mentioning anyone and the platform routes it to the right specialist based on the content. See the Specialists guide for details.
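The two paths described above (an explicit @ mention versus content-based routing) can be pictured with a small sketch. This is not the platform's implementation: the handle format, the `mentioned_specialists` and `route` functions, and the `pick_by_content` callback are all hypothetical names introduced only to illustrate the idea.

```python
import re
from typing import Callable

# Assumption: specialist handles consist of letters, digits, underscores, and hyphens.
# The lookbehind skips an "@" that is part of an email address.
MENTION_PATTERN = re.compile(r"(?<!\w)@([A-Za-z0-9_-]+)")


def mentioned_specialists(message: str, known: set[str]) -> list[str]:
    """Return known specialist handles mentioned with @, in order, without duplicates."""
    found: list[str] = []
    for handle in MENTION_PATTERN.findall(message):
        key = handle.lower()
        if key in known and key not in found:
            found.append(key)
    return found


def route(message: str, known: set[str], pick_by_content: Callable[[str], str]) -> list[str]:
    """Prefer explicit mentions; otherwise fall back to a content-based choice."""
    mentioned = mentioned_specialists(message, known)
    if mentioned:
        return mentioned
    # Hypothetical fallback: how the platform actually chooses is not documented here.
    return [pick_by_content(message)]
```

For example, `route("@code-reviewer please check this", {"code-reviewer"}, chooser)` would return the mentioned handle and never call `chooser`.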

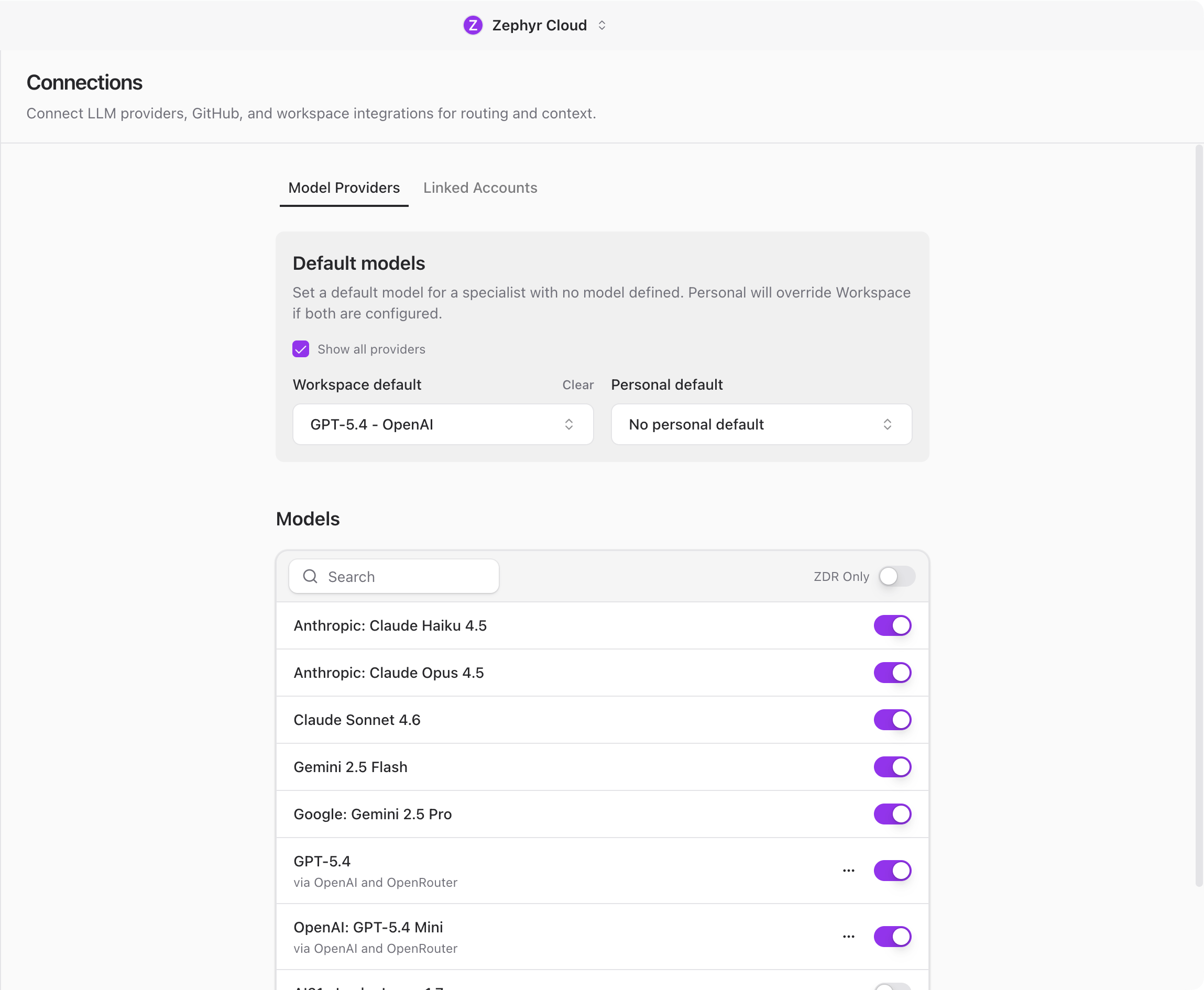

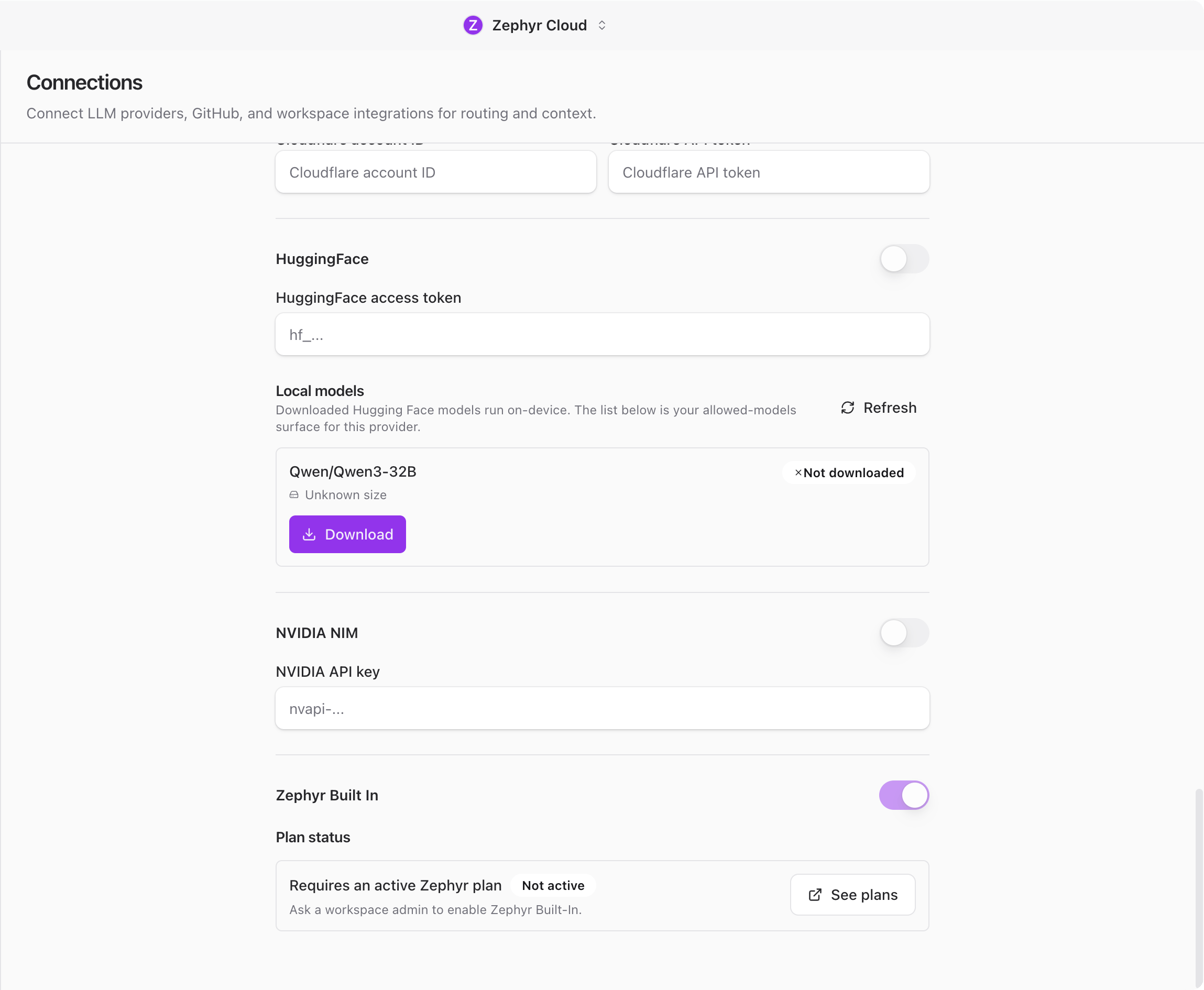

Model Routing

The AI Platform is provider-agnostic and model-agnostic. You can connect multiple LLM providers at the same time: OpenRouter, OpenAI, Anthropic, Amazon Bedrock, Cloudflare Workers AI, Hugging Face, and the Zephyr Built-in provider.

The platform picks the right model based on the specialist's configuration and your workspace defaults. You can set a workspace-wide default model, and individual team members can set a personal override. Specialists can also have their own preferred models.
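As a rough mental model, the three settings above can be thought of as a fallback chain. The sketch below assumes one particular order (specialist preference first, then the personal override, then the workspace default). That order is an assumption made for illustration, not documented platform behavior, and `resolve_model` is a hypothetical function name.

```python
from typing import Optional, Sequence


def resolve_model(
    specialist_preferred: Sequence[str],
    personal_override: Optional[str],
    workspace_default: Optional[str],
) -> Optional[str]:
    """Return the first configured model in the assumed precedence order."""
    # Assumed order: specialist preference > personal override > workspace default.
    for candidate in (*specialist_preferred, personal_override, workspace_default):
        if candidate:
            return candidate
    # Nothing is configured at any level.
    return None
```

With no specialist preference, `resolve_model([], "model-b", "model-a")` picks the personal override `"model-b"` over the workspace default.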

Zephyr Built-in provider

The Zephyr Built-in provider is included with a paid Zephyr plan. It requires no API key and no external account. If your workspace has an active plan, the provider is available immediately.

If you do not have a Zephyr plan, connect any of the other providers with your own API key. See Connections for setup instructions.
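The rule above amounts to a simple check: the built-in provider is available when the workspace has an active plan, and any other provider is available once a key is supplied. A minimal sketch, assuming a hypothetical `available_providers` helper and a plain dictionary of provider names to API keys (neither is part of the product):

```python
def available_providers(has_active_plan: bool, api_keys: dict[str, str]) -> list[str]:
    """List the providers a workspace could use under the rule described above."""
    providers: list[str] = []
    if has_active_plan:
        # The built-in provider needs no API key and no external account.
        providers.append("Zephyr Built-in")
    # Any other provider counts only if a non-empty key was supplied.
    providers.extend(name for name, key in api_keys.items() if key.strip())
    return providers


def specialists_can_respond(has_active_plan: bool, api_keys: dict[str, str]) -> bool:
    """Specialists need at least one configured provider before they can respond."""
    return bool(available_providers(has_active_plan, api_keys))
```

A workspace with no plan and no keys yields an empty list, which is the case where specialists cannot respond yet.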

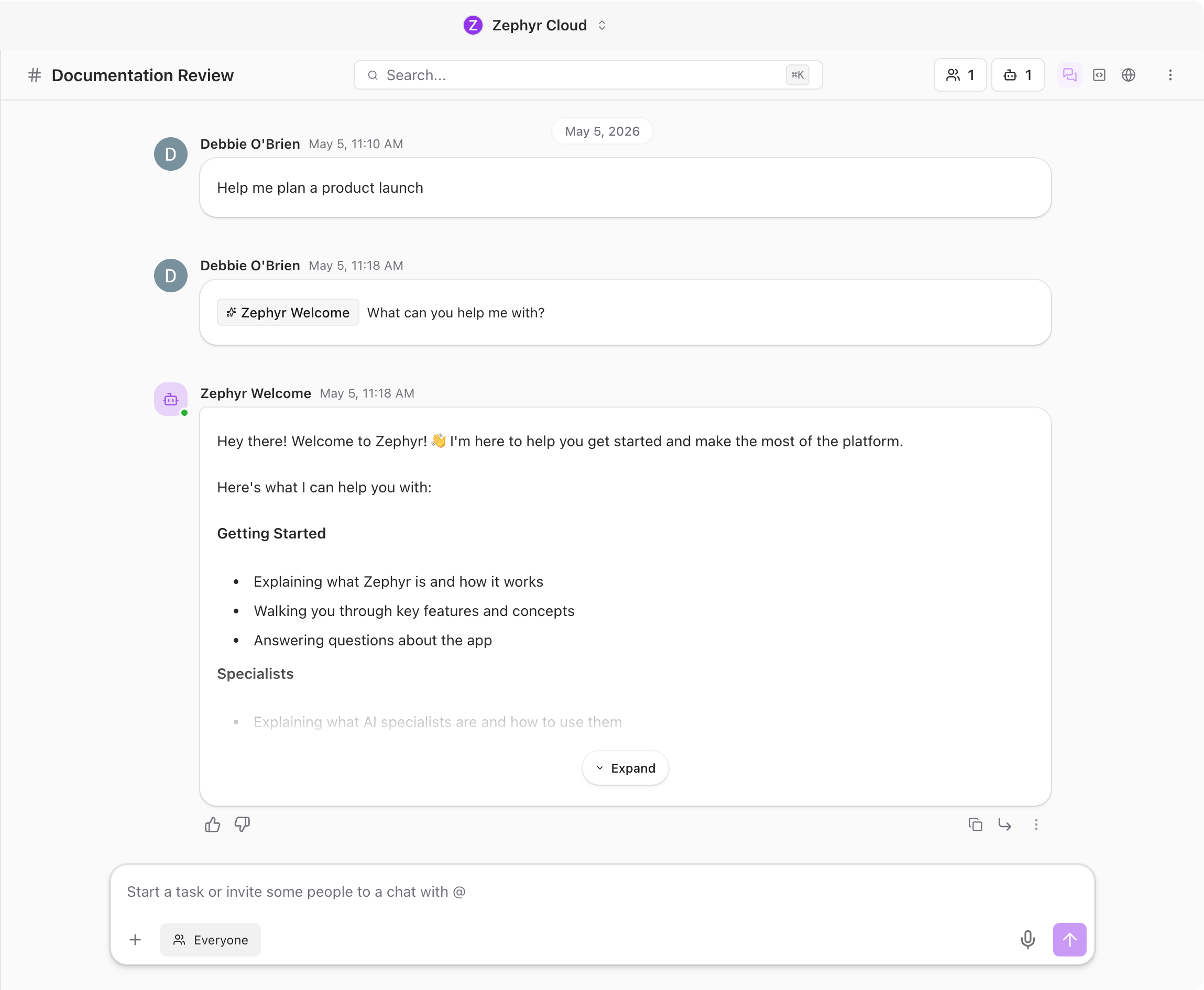

Chat

Conversations work the way they do in modern messaging apps.

Messages support rich formatting, file attachments (drag and drop or paste), and emoji reactions. Hover over any message to react, reply in a thread, copy it, or translate it.

Threads let you branch off from a message for focused discussion without cluttering the main channel.

Tool calls are visible in the chat. When a specialist uses a tool (searching code, reading a file, querying a database), you see what it did and can click to expand the details.

Search lets you jump to any conversation or person. Press Cmd+K (macOS) or Ctrl+K (Windows/Linux) to open it.

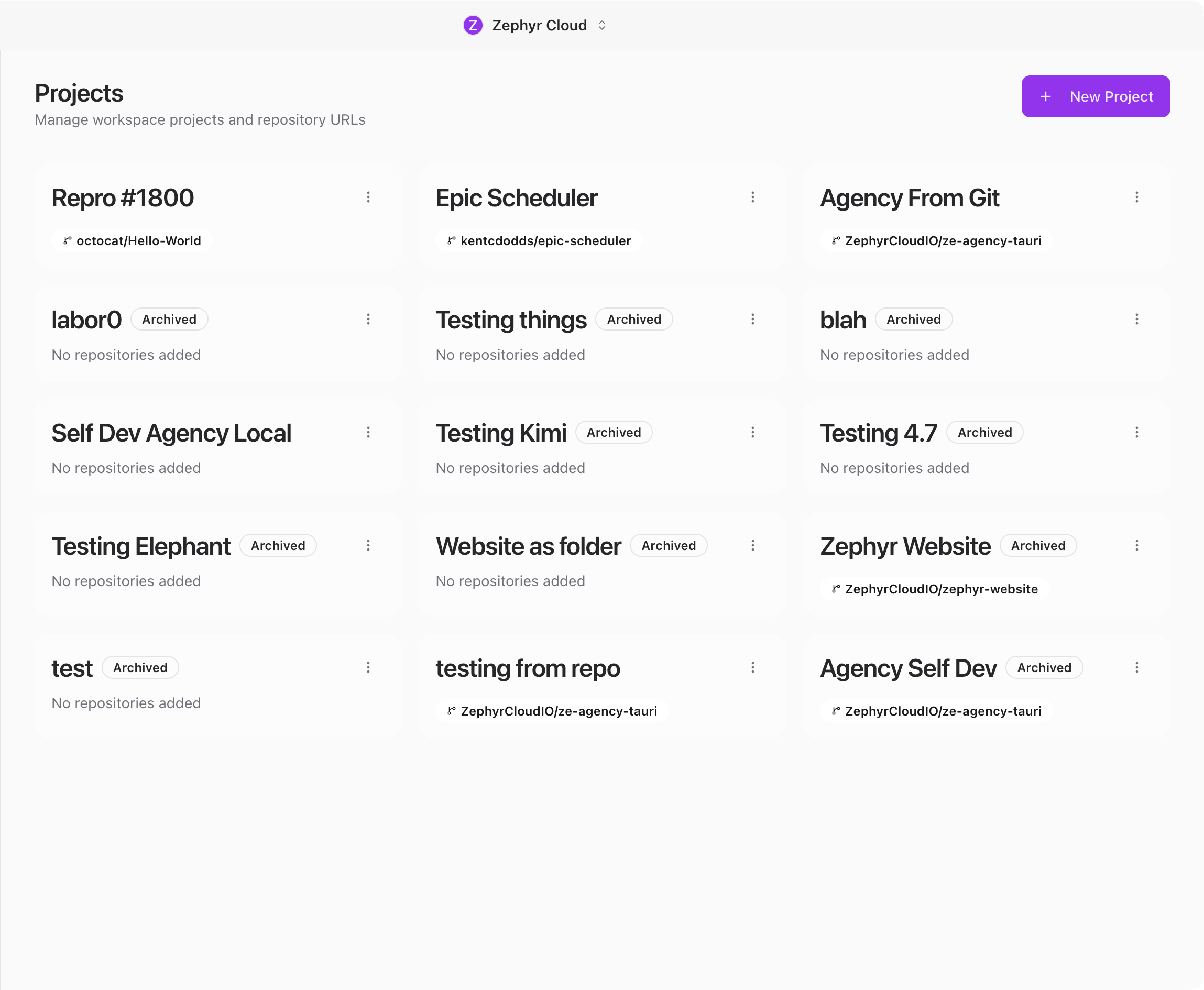

Projects

Projects bring together everything related to a piece of work: a GitHub repository for codebase context, channels for focused conversation, a task board for tracking work, and knowledge sources for reference material.

See the Projects guide to learn more.

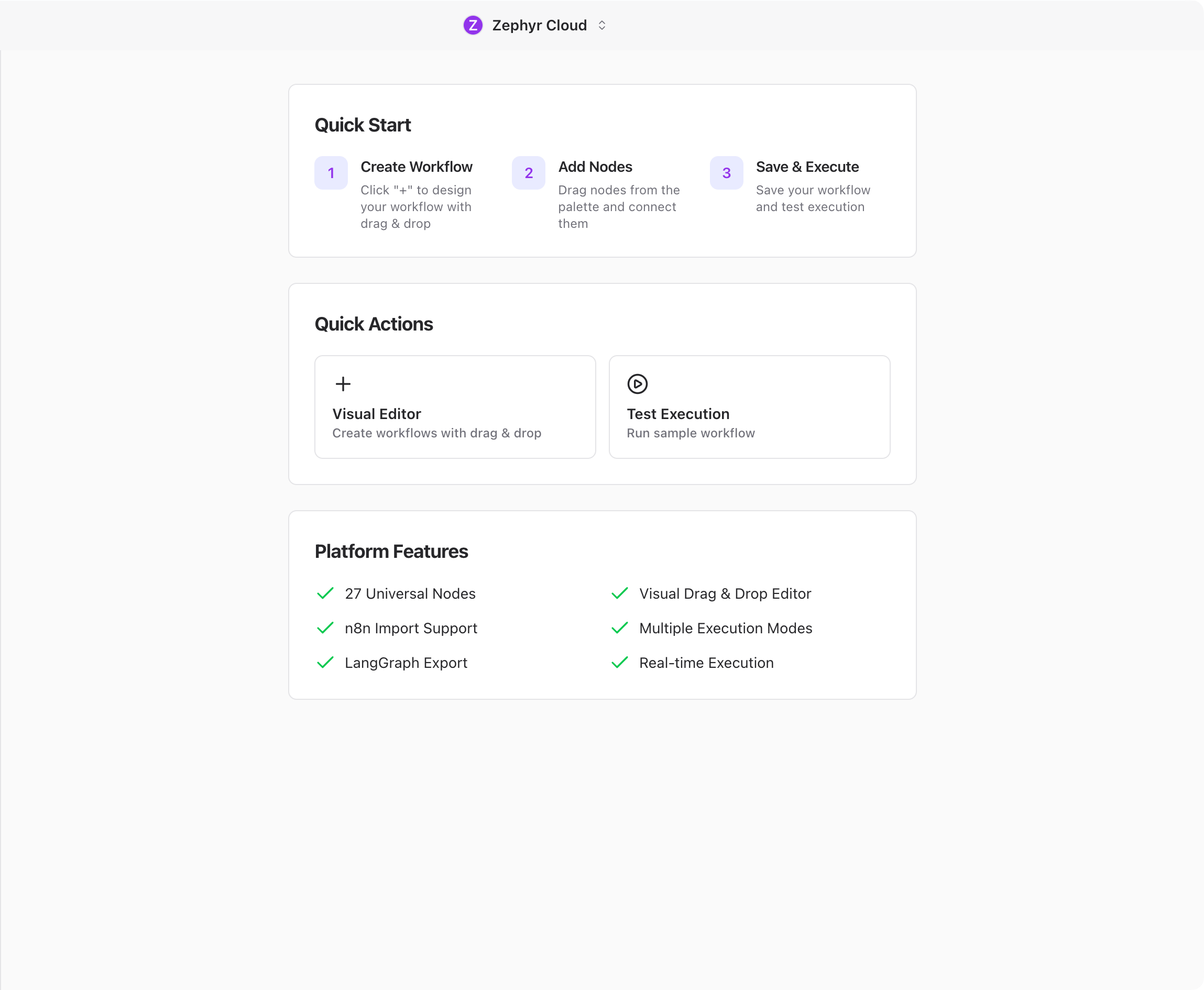

Workflows

The workflow editor lets you build visual automations. Drag nodes onto a canvas, connect them, and define triggers. Workflows can call APIs, transform data, invoke specialists, and send notifications.
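One way to picture a workflow is as a directed graph: nodes are steps, and connections say which step must finish before another starts. The sketch below is only an illustration of that idea. The node names and the dictionary layout are hypothetical, and the editor's real storage format is not described here.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: each key is a node, each value lists the nodes it depends on.
workflow = {
    "trigger": [],
    "call_api": ["trigger"],
    "transform": ["call_api"],
    "invoke_specialist": ["transform"],
    "notify": ["invoke_specialist"],
}


def execution_order(graph: dict[str, list[str]]) -> list[str]:
    """Return the nodes in an order that respects every connection."""
    # TopologicalSorter raises graphlib.CycleError if the connections form a loop.
    return list(TopologicalSorter(graph).static_order())
```

For the linear chain above, `execution_order(workflow)` starts at `trigger` and ends at `notify`.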

See the Workflows guide to learn more.

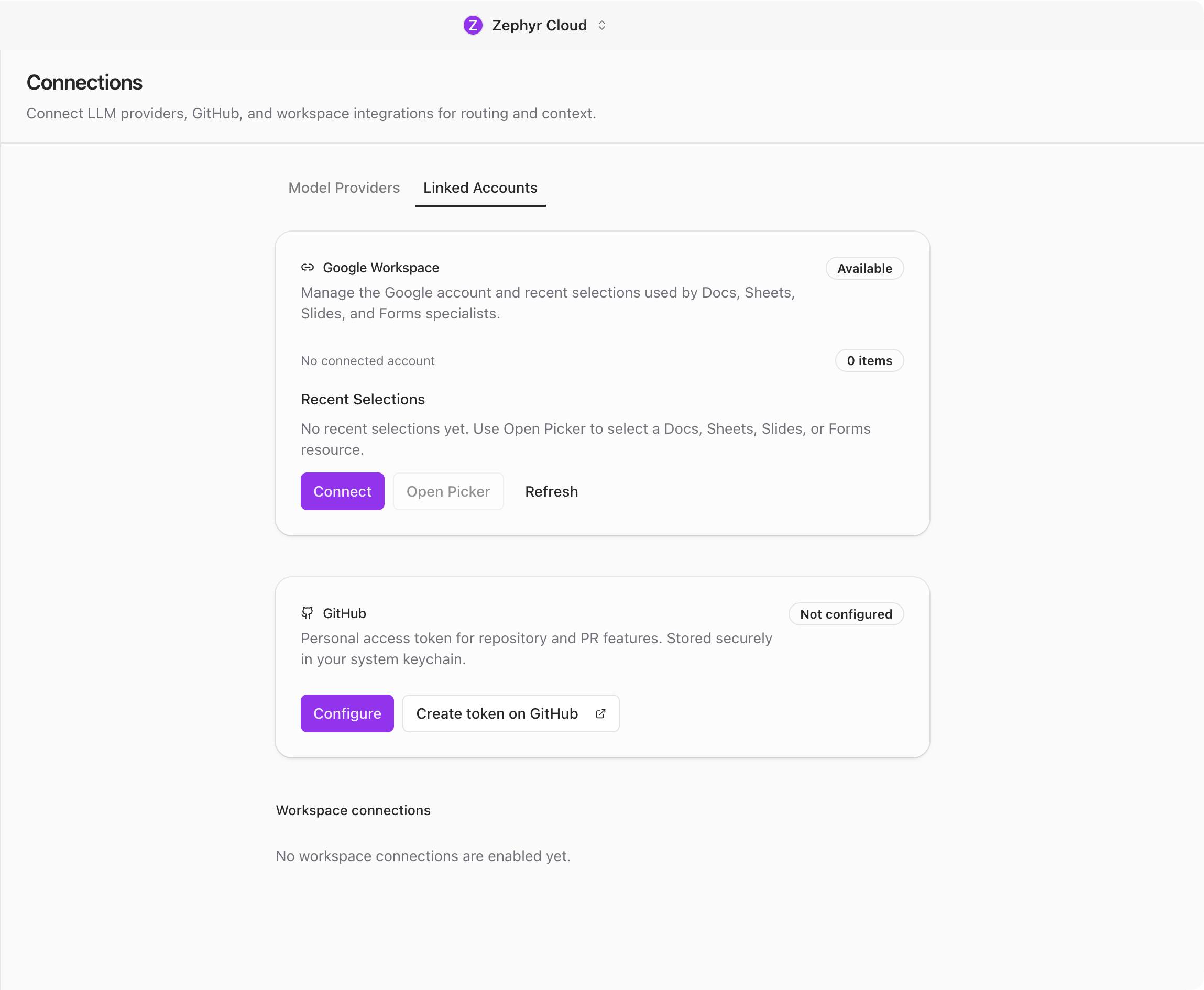

Connections

Specialists need at least one LLM provider configured before they can respond. You can also connect external services like GitHub, Slack, Notion, Figma, and Linear to give specialists context from your existing tools.

See Connections for the full setup.

What You Will Need

- A Mac (macOS 14 or later) or a Windows computer

- An internet connection for sign-up and syncing

- An API key from an LLM provider, or an active Zephyr plan for the built-in provider

Next Steps

If you have not installed the app yet, head to Installation. Otherwise, dive into the section that matches what you want to do:

- Chat for messaging, threads, and the channel layout

- Specialists for browsing the marketplace and building your own

- Projects for connecting code repositories and giving specialists context

- Workflows for building visual automations

- Settings for account, workspace, and integration configuration