Builder Tab Details

Beyond the Persona tab, the specialist builder has four configuration tabs: Tasks & Workflows, Knowledge, Tooling, and Benchmarks. For the overall builder layout and flow, see Building Specialists.

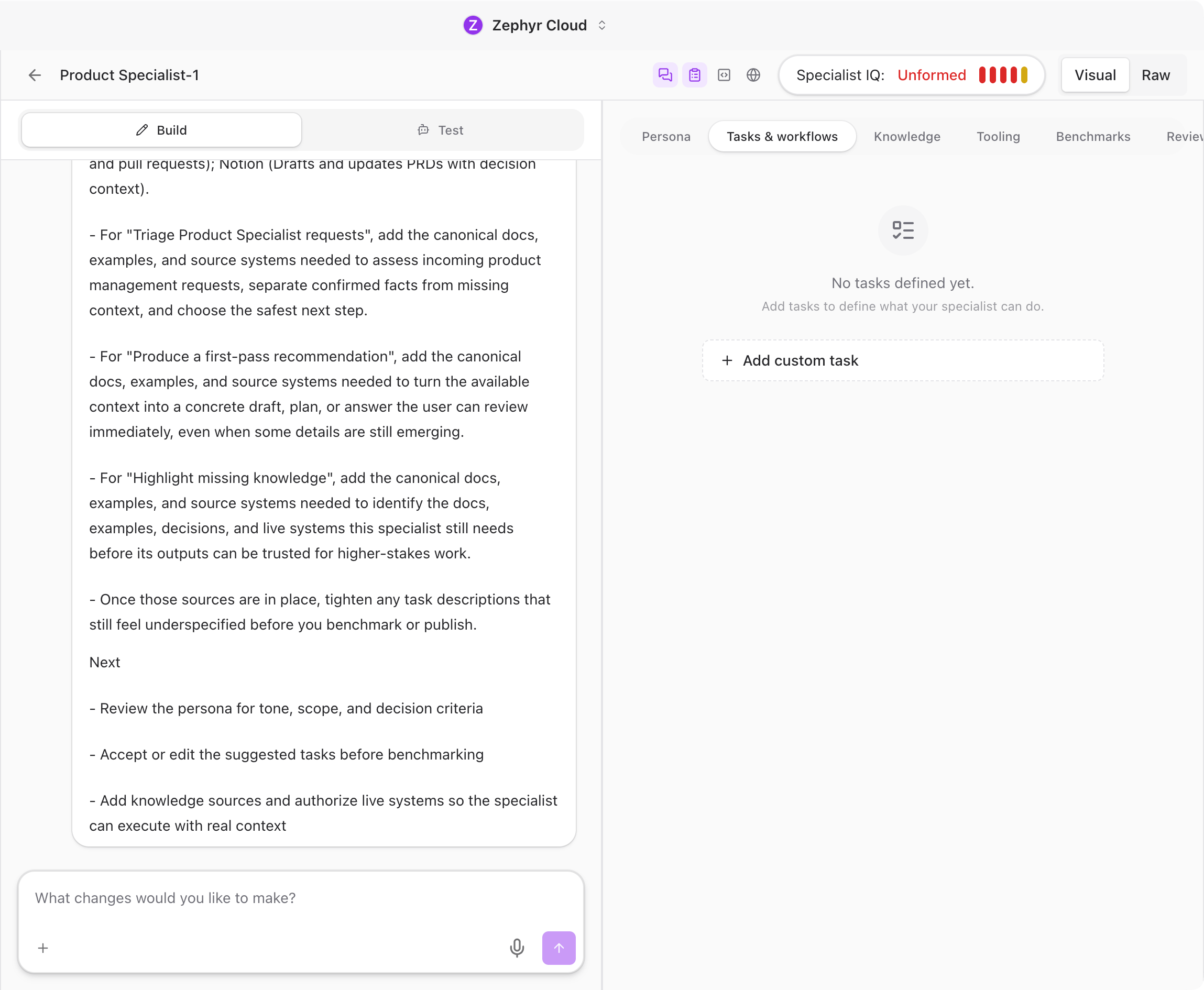

Tasks & Workflows

Tasks define what the specialist can do. The builder suggests tasks automatically based on the persona you configured, and you can accept, edit, or remove them. You can also add your own custom tasks.

Each task has:

- Title. A short label like "Code Review" or "Write Unit Tests."

- Key. A machine-readable identifier used internally.

- Description. A detailed explanation of what the task involves.

- Examples. Sample prompts that show how a user would trigger this task.

- Benchmark status. A badge showing whether the task has passing quality tests.

Tasks also connect to workflows. When a user's message matches a task, the specialist can automatically trigger an associated workflow.
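As a rough illustration of how these fields fit together, a task could be modeled as the record below. This is a minimal sketch, not the platform's actual schema: the class name, the field names, and the `workflow_key` link are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Task:
    """Hypothetical in-memory shape of a task; all names are illustrative."""
    title: str                                 # short label shown in the builder
    key: str                                   # machine-readable identifier
    description: str                           # detailed explanation of the task
    examples: list[str] = field(default_factory=list)  # sample trigger prompts
    benchmark_passing: Optional[bool] = None   # None: no benchmark has run yet
    workflow_key: Optional[str] = None         # associated workflow, if any (assumed)


code_review = Task(
    title="Code Review",
    key="code_review",
    description="Review a diff for bugs, style issues, and missing tests.",
    examples=["Can you review this pull request?"],
    workflow_key="review_workflow",
)
```

Keeping the key separate from the title means the label can be reworded without breaking anything that refers to the task by its identifier.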

Knowledge

The Knowledge tab is where you give the specialist access to documentation and reference material. Three source types are supported:

- URLs. Paste a web address and set a crawl depth from 1 to 5. The builder fetches the page and follows links up to the specified depth.

- Files and folders. Upload local files or select folders from the virtual file system.

- Google Drive. Connect a Google Drive account and select documents or folders.
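To make the crawl depth setting for URL sources concrete, here is a minimal sketch of a depth-limited, breadth-first crawl. It runs over a hard-coded link graph rather than real pages, and it assumes the starting page counts as hop 0, so a depth of 1 adds only directly linked pages. The builder's actual counting rule is not stated here, and the `LINKS` data and `crawl` function are hypothetical.

```python
from collections import deque

# Hypothetical link graph standing in for real pages: URL -> links on that page.
LINKS = {
    "https://example.com/docs": [
        "https://example.com/docs/a",
        "https://example.com/docs/b",
    ],
    "https://example.com/docs/a": ["https://example.com/docs/a/deep"],
    "https://example.com/docs/b": [],
    "https://example.com/docs/a/deep": [],
}


def crawl(start: str, depth: int) -> list[str]:
    """Breadth-first crawl; assumes the start page is hop 0 and `depth` caps the hops."""
    if not 1 <= depth <= 5:
        raise ValueError("crawl depth must be between 1 and 5")
    seen = {start}
    order = [start]
    queue = deque([(start, 0)])
    while queue:
        url, hops = queue.popleft()
        if hops == depth:
            continue  # do not follow links beyond the configured depth
        for link in LINKS.get(url, []):
            if link not in seen:
                seen.add(link)
                order.append(link)
                queue.append((link, hops + 1))
    return order
```

Under this assumption, `crawl("https://example.com/docs", 1)` returns the start page plus its two direct links, and raising the depth to 2 also picks up the nested page.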

After adding a source, the builder enriches it automatically. Enrichment includes generating summaries, evaluating tech stack relevance, and scoring how useful the source is for each task. Sources move through four statuses: Pending, Indexing, Indexed, and Error.
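The four statuses can be read as a small state machine. The sketch below is one plausible interpretation: the status names come from this page, but the allowed transitions are an assumption, since only the statuses themselves are documented.

```python
from enum import Enum


class SourceStatus(Enum):
    PENDING = "Pending"
    INDEXING = "Indexing"
    INDEXED = "Indexed"
    ERROR = "Error"


# Assumed transitions; the documentation only names the four statuses.
ALLOWED = {
    SourceStatus.PENDING: {SourceStatus.INDEXING, SourceStatus.ERROR},
    SourceStatus.INDEXING: {SourceStatus.INDEXED, SourceStatus.ERROR},
    SourceStatus.INDEXED: set(),
    SourceStatus.ERROR: set(),
}


def advance(current: SourceStatus, target: SourceStatus) -> SourceStatus:
    """Return the new status, or raise if the move is not in the assumed table."""
    if target not in ALLOWED[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target
```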

Tooling

The Tooling tab controls which tools the specialist can access when generating responses. There are five categories:

Built-in Agency Tools

A searchable catalog of tools provided by the platform. Browse the list and toggle individual tools on or off.

Tool Patterns

Define prefix-based patterns to enable groups of tools at once. For example, `vfs_*` enables all virtual file system tools. A live preview shows which tools match the current pattern.
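The matching behind the live preview can be approximated in a few lines. This sketch assumes a pattern is either an exact tool name or a prefix followed by a trailing asterisk. The tool names other than the vfs_ prefix are made up for the example, and the builder may support richer pattern syntax than shown here.

```python
# Hypothetical tool catalog; only the vfs_ prefix comes from the documentation.
TOOL_NAMES = ["vfs_read", "vfs_write", "vfs_list", "web_search", "run_tests"]


def matches(pattern: str, name: str) -> bool:
    """Prefix match when the pattern ends in '*', exact match otherwise."""
    if pattern.endswith("*"):
        return name.startswith(pattern[:-1])
    return name == pattern


def preview(pattern: str, tools: list[str]) -> list[str]:
    """Return the tools a pattern would enable, in catalog order."""
    return [name for name in tools if matches(pattern, name)]
```

With this catalog, `preview("vfs_*", TOOL_NAMES)` selects the three vfs_ tools and leaves the others disabled.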

MCP Servers

Attach existing Model Context Protocol servers or create new ones. MCP servers let the specialist call external APIs and services as part of its workflow.

MCP Tools

Enable specific tools from attached MCP servers. You can select individual tools by their namespaced identifier or use patterns to match groups.

Portable MCP Templates

Author reusable MCP server templates that can be installed into other specialists. Templates include server configuration, tool definitions, and connection details. You can create, install, and delete templates from this section.

A validation banner at the top of the Tooling tab shows real-time status. If there are configuration issues (missing servers, invalid patterns, unreachable endpoints), the banner highlights them.

Benchmarks

Benchmarks are automated quality tests that validate the specialist's behavior. The Benchmarks tab has four sub-sections:

Run

Execute benchmark suites against the specialist. Choose a suite, select a prompt level (L0 through Lx, where higher levels are harder), and pick a run mode:

- Specialist default. Uses the specialist's configured model and prompt.

- Model override. Uses a specific model you select.

- Raw prompt. Tests with a plain prompt, bypassing the specialist's system prompt.

A live progress indicator shows each scenario as it runs.
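The choices on the Run sub-section amount to a small request object. The sketch below is illustrative only: `RunMode`, `RunRequest`, and their field names are hypothetical, and the one rule it enforces (a model override needs a model) is inferred from the mode descriptions above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RunMode(Enum):
    SPECIALIST_DEFAULT = "specialist_default"  # configured model and prompt
    MODEL_OVERRIDE = "model_override"          # a model you select
    RAW_PROMPT = "raw_prompt"                  # bypasses the system prompt


@dataclass
class RunRequest:
    """Hypothetical shape of a benchmark run request."""
    suite: str
    prompt_level: str                  # for example "L0"
    mode: RunMode = RunMode.SPECIALIST_DEFAULT
    model: Optional[str] = None        # only meaningful for MODEL_OVERRIDE

    def __post_init__(self) -> None:
        if self.mode is RunMode.MODEL_OVERRIDE and not self.model:
            raise ValueError("model override mode needs a model name")


default_run = RunRequest(suite="smoke-tests", prompt_level="L0")
```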

Optimize

Automatically improve the specialist using AI-driven research. The optimizer evaluates the specialist across 12 quality dimensions, generates candidate configurations within a budget you set, and presents results on a leaderboard. You can preview the diff between the current configuration and each candidate, then apply the best one with a single click.
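To show what previewing a diff and picking from the leaderboard could look like, here is a minimal sketch using Python's standard `difflib`. The configuration keys, candidate names, and scores are invented for the example. The optimizer's real configuration format and scoring are not described on this page.

```python
import difflib
import json


def config_diff(current: dict, candidate: dict) -> str:
    """Unified diff of two configurations, serialized as sorted JSON."""
    before = json.dumps(current, indent=2, sort_keys=True).splitlines()
    after = json.dumps(candidate, indent=2, sort_keys=True).splitlines()
    lines = difflib.unified_diff(
        before, after, fromfile="current", tofile="candidate", lineterm=""
    )
    return "\n".join(lines)


# Made-up configuration and leaderboard entries, for illustration only.
current_config = {"model": "model-x", "temperature": 0.7}
leaderboard = [
    {"name": "candidate-a", "score": 0.81,
     "config": {"model": "model-x", "temperature": 0.2}},
    {"name": "candidate-b", "score": 0.77,
     "config": {"model": "model-y", "temperature": 0.7}},
]

best = max(leaderboard, key=lambda entry: entry["score"])
diff_text = config_diff(current_config, best["config"])
```

Serializing with sorted keys keeps the diff stable, so only real configuration changes show up as added or removed lines.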

Create

Build custom benchmark suites from scratch. Each suite contains scenarios, and each scenario includes:

- Multi-level prompts. Different phrasings at increasing difficulty.

- Assertions. Checks on the response content, drawn from five assertion types.

- Validation commands. Optional shell commands that check response properties programmatically.

- Oracle answers. Reference answers the specialist's output is compared against.
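The four parts of a scenario can be pictured as one record. The sketch below is an assumed shape, not the platform's file format: class and field names are hypothetical, and the assertion kind `"contains"` is a placeholder, since the five assertion types are not named on this page.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Assertion:
    kind: str       # placeholder; the five real assertion types are not listed here
    expected: str


@dataclass
class Scenario:
    """Hypothetical shape of one benchmark scenario."""
    name: str
    prompts: dict[str, str]                     # prompt level -> prompt text
    assertions: list[Assertion] = field(default_factory=list)
    validation_commands: list[str] = field(default_factory=list)  # optional shell commands
    oracle_answer: Optional[str] = None         # reference answer for comparison


scenario = Scenario(
    name="reverse-a-string",
    prompts={
        "L0": "Write a Python function that reverses a string.",
        "L1": "Reverse a string without using slicing.",
    },
    assertions=[Assertion(kind="contains", expected="def ")],
    validation_commands=["python -m py_compile solution.py"],
    oracle_answer="def reverse(s):\n    return ''.join(reversed(s))",
)
```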

Report Card

View the results of past benchmark runs. The report card shows an overall score, pass/fail counts, token usage, estimated cost, and tool call counts. You can compare two runs side by side and drill into per-scenario traces to see exactly where the specialist succeeded or failed.
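A side-by-side comparison boils down to per-metric deltas between two runs. This sketch assumes a flat summary record with the metrics listed above. The `RunSummary` class, its field names, and the sample numbers are all made up for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class RunSummary:
    """Hypothetical summary of one benchmark run."""
    score: float
    passed: int
    failed: int
    tokens: int
    estimated_cost: float
    tool_calls: int


def compare(baseline: RunSummary, latest: RunSummary) -> dict[str, float]:
    """Per-metric change from baseline to latest (positive means it went up)."""
    return {
        f.name: getattr(latest, f.name) - getattr(baseline, f.name)
        for f in fields(baseline)
    }


# Invented numbers, for illustration only.
baseline = RunSummary(0.72, passed=18, failed=7, tokens=41000,
                      estimated_cost=1.9, tool_calls=64)
latest = RunSummary(0.84, passed=21, failed=4, tokens=38500,
                    estimated_cost=1.7, tool_calls=52)
delta = compare(baseline, latest)
```

Whether a positive delta is good depends on the metric: more passes is an improvement, while more tokens or tool calls usually is not.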

Next Steps

- Building Specialists. Return to the builder overview for persona and publishing details.

- Working with Specialists. See how the finished specialist behaves in a real conversation.

- Model Overrides. Choose which AI model powers the specialist.